Search reveals useful dimensions in latent idea space

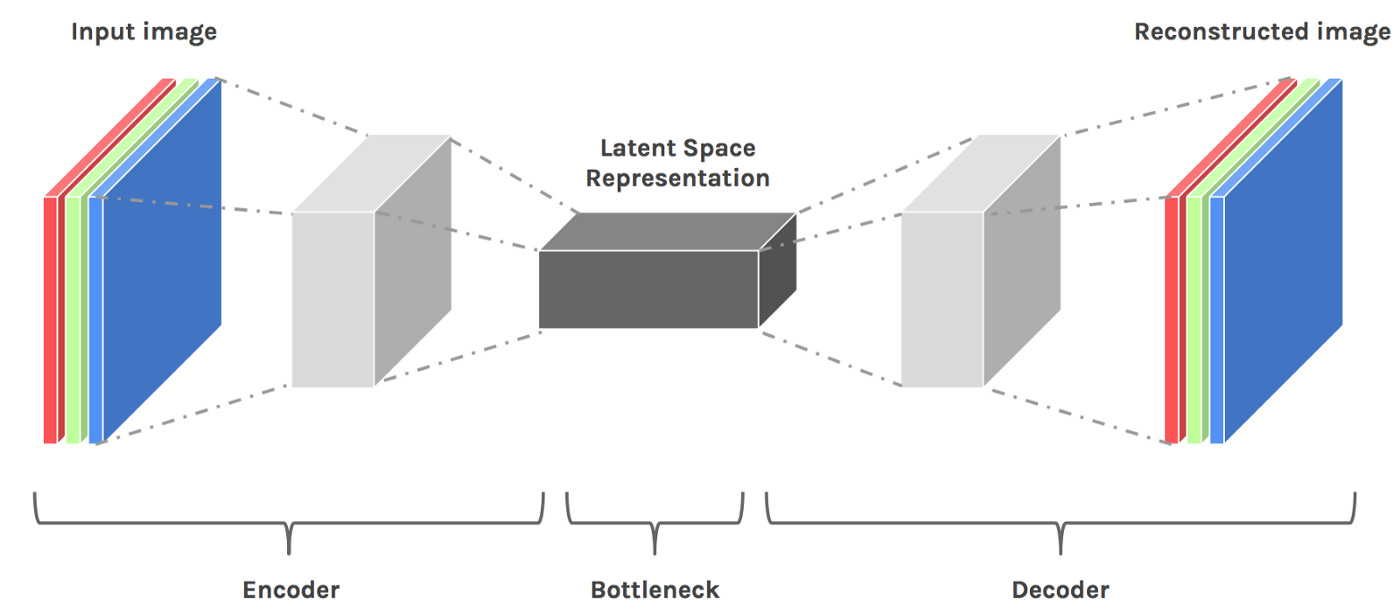

When you train a deep learning system, it builds up a latent space. A latent space is a hyper-dimensional space where things that are similar along some dimension sit near each other along that dimension.

Latent space is like a map you can use to correlate things with other things, along many dimensions.

You can scrub through this latent space to discover all kinds of weird and wonderful interpolations between characteristics. For example, you can generate new chairs by scrubbing through the latent space between chairs.

…or perhaps invent new arcana by scrubbing through the latent space between tarot cards.
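As a rough illustration of what "scrubbing" between two points might look like, here is a minimal sketch using plain linear interpolation. The three-dimensional vectors and the names `lerp`, `scrub`, `armchair`, and `stool` are hypothetical stand-ins. A real model would have many more dimensions and would need a decoder to turn each intermediate point back into an image of a chair.

```python
def lerp(a, b, t):
    """Linearly interpolate between latent vectors a and b at position t in [0, 1]."""
    if len(a) != len(b):
        raise ValueError("latent vectors must have the same number of dimensions")
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def scrub(a, b, steps):
    """Return `steps` evenly spaced points from a to b, endpoints included."""
    if steps < 2:
        raise ValueError("need at least two steps to include both endpoints")
    return [lerp(a, b, i / (steps - 1)) for i in range(steps)]


# Hypothetical latent codes for two chairs (made up for illustration).
armchair = [0.0, 2.0, 4.0]
stool = [4.0, 0.0, 4.0]

for point in scrub(armchair, stool, steps=5):
    print(point)
```

Each printed point is a candidate "in-between chair". The middle one, `[2.0, 1.0, 4.0]`, sits halfway between the two along every dimension.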

What if we imagined ideas as a kind of hyper-dimensional latent space? Instead of seeing knowledge as linear narrative, or a graph of hyperlinks, what if we pictured it as a cloud of associations across many dimensions?

Imagine your notes as a stack of papers. Next to that stack of papers, you have a long red string, with a needle at one end. To perform a search, you leaf through each of the pages. Whenever you find a match for your query, you poke the needle through, right where the match exists on the page, and you thread the page onto the string. You’re methodical, so you order the pages on the string by relevance, taking into account how close the match is, and where it appears on the page. Now, stretch out the red string. You’ve arranged your ideas along one dimension of latent idea space. Imagine doing this for all possible dimensions at once.
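The red string can be sketched in a few lines of code. This is only one possible reading of the metaphor: the scoring rule below (number of matches, plus a bonus for matching early on the page) is an assumption made for illustration, and `thread_notes` and the sample notes are hypothetical.

```python
def thread_notes(notes, query):
    """Return (title, score) pairs for notes that match query, most relevant first."""
    needle = query.lower()
    if not needle:
        return []
    threaded = []
    for title, text in notes.items():
        haystack = text.lower()
        position = haystack.find(needle)
        if position == -1:
            continue  # no match: this page stays in the stack
        count = haystack.count(needle)
        # Assumed scoring: more matches rank higher, and a match
        # nearer the top of the page earns a bonus between 0 and 1.
        earliness = 1 - position / len(haystack)
        threaded.append((title, count + earliness))
    # Stretch out the string: order the pages by relevance.
    return sorted(threaded, key=lambda pair: pair[1], reverse=True)


# Hypothetical notes, made up for illustration.
notes = {
    "gardens": "Search is a garden path. Search again tomorrow.",
    "maps": "A map is a lens. Search comes later.",
    "chairs": "Chairs have four legs.",
}

for title, score in thread_notes(notes, "search"):
    print(title, round(score, 2))
```

Here "gardens" lands first on the string because it matches twice and at the very top of the page, "maps" follows, and "chairs" is never threaded at all. A different query would thread the same pages in a different order, which is the sense in which each query picks out its own dimension.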

Search lets us explore latent idea space. We can use questions as a lens to choose a dimension in latent idea space, and construct an answer.

Any sufficiently advanced search is indistinguishable from a hyperlink. A search query is kind of like a hyperlink that can be constructed on the fly. Our question forges a link between notes, just-in-time. When a search becomes extremely specific, it functions like a coordinate to a specific point in latent idea space.
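One way to picture a query forging links just-in-time is to treat every pair of notes that match the same query as linked, for as long as the question is being asked. This is a toy sketch under that assumption. `just_in_time_links` and the sample notes are hypothetical, and a real system would likely use something richer than substring matching.

```python
from itertools import combinations


def just_in_time_links(notes, query):
    """Return pairs of note titles that the query links together."""
    needle = query.lower()
    if not needle:
        return []
    matching = sorted(
        title for title, text in notes.items() if needle in text.lower()
    )
    # Every pair of matching notes becomes a link that exists only for this query.
    return list(combinations(matching, 2))


# Hypothetical notes, made up for illustration.
linked_notes = {
    "gardens": "Search is a garden path.",
    "maps": "A map is a lens. Search comes later.",
    "chairs": "Chairs have four legs.",
}

print(just_in_time_links(linked_notes, "search"))
```

Nothing in the notes themselves records a link between "gardens" and "maps". The link appears only because the question was asked.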

Where a book offers one narrative linearization of a topic, and hypertext offers a static graph of relationships, we might see search as a dynamic graph. Search results might be seen as a kind of hyperdocument that is constructed just in time, in response to our question.

Search is a signal of intent. It reveals the useful dimensions within latent idea space. By asking the question, we are signaling which possible connections, in the space of all possible connections, matter to us. The questions themselves are valuable and can be used to close feedback loops between our past and future selves. Questions are also knowledge, and can be used to construct both answers and further questions.