Pond brains and GPT-4

Stafford Beer and Gordon Pask were building a pond that thinks. Their biological computing project set out to build ecosystems with inputs and outputs that could function as computers.

Beer also reported attempts to induce small organisms—Daphnia collected from a local pond—to ingest iron filings so that input and output couplings to them could be achieved via magnetic fields, and he made another attempt to use a population of the protozoan Euglena via optical couplings. Beer’s last attempt in this series was to use not specific organisms but an entire pond ecosystem as a homeostatic controller.

(Pickering, 2004. The Science of the Unknowable)

According to [Stafford] Beer, biological systems can solve these problems that are beyond our cognitive capacity. They can adapt to unforeseeable fluctuations and changes. The pond survives. Our bodies maintain our temperatures close to constant whatever we eat, whatever we do, in all sorts of physical environments. It seems more than likely that if we were given conscious control over all the parameters that bear on our internal milieu, our cognitive abilities would not prove equal to the task of maintaining our essential variables within bounds and we would quickly die. This, then, is the sense in which Beer thought that ecosystems are smarter than we are—not in their representational cognitive abilities, which one might think are nonexistent, but in their performative ability to solve problems that exceed our cognitive ones.

(Pickering, 2010. The Cybernetic Brain)

It sounds wild, but when you take a step back and reflect, it makes a kind of deep sense. Each participant in an ecosystem—plants, algae, bacteria—reacts and adapts to changes in inputs. If you squint, you might see them as transistors, or really, as whole computers, networked together.

Ecosystems compute, and they are smarter than we are—not in their representational cognitive abilities, but in their performative ability to solve problems that exceed our cognitive ones.

What is intelligence? What kinds of things are intelligent? Choose all that apply:

A person

A parrot

An octopus

A frog

A slime mold

An ant colony

A pond

The answer offered by cybernetics is “all of the above”.

To some, the critical test of whether a machine is or is not a ‘brain’ would be whether it can or cannot ‘think.’ But to the biologist the brain is not a thinking machine, it is an acting machine; it gets information and then it does something about it.

(W. Ross Ashby)

All of these things are intelligent. They get information and do something about it.

Evolution is a pragmatist. It only cares about actual behavior. It is the getting and the doing that matter. The how can be approached in many different ways: through pheromone trails, or trophic networks, or nerve nets, or brains, or symbolic representation. Evolution doesn’t care. If you can get information and do something about it, you are thinking.

This biological view of thought embraces many nonhuman ways of thinking that are difficult for us to grasp. Humans are representational thinkers. We consciously construct symbolic representations to understand and to act. As representational thinkers, we are tempted to say “this pond does not think”, because it does not “have” thoughts like we do. That is, the pond does not use symbolic representations to consciously reflect upon what it is doing.

But what if we tried to operate the pond through symbolic representation? That is, what if we described it fully in words, in such complete detail that the description gave us a functioning pond? All the plants, the bugs, the algae, the balance between them, every material flow, every trophic relationship, each cell of each organism, the water, the motion of the molecules… We quickly find that our symbolic representation is not up to the job.

Representation is flattening. It compresses our extremely high-dimensional reality into little symbols (a, b, c, d) in order to pipe them through our conscious mind for further processing. It’s a flexible tool, but it has extremely limited bandwidth and throughput, since everything has to squeeze through that narrow conscious channel. Good for people problems, maybe not for pond problems. Ecosystems are too high-dimensional for representational thought.

Beer started from this realization: in a world of exceedingly complex systems, for which any representation can only be provisional, performance is what we need to care about. (Pickering, 2010)

If we take on Stafford Beer’s perspective, we start to see that people, parrots, and ponds all construct models of our environment, and we use these models to adapt. All of us embody forms of intelligence that evolution has honed to our particular way of being.

By insisting on thought as actual behavior, we gain a respect for nonhuman intelligences that we might otherwise miss or dismiss. There are many ways to get information and do something about it.

But if a pond can think, what else can think? Where are we going to draw the line?

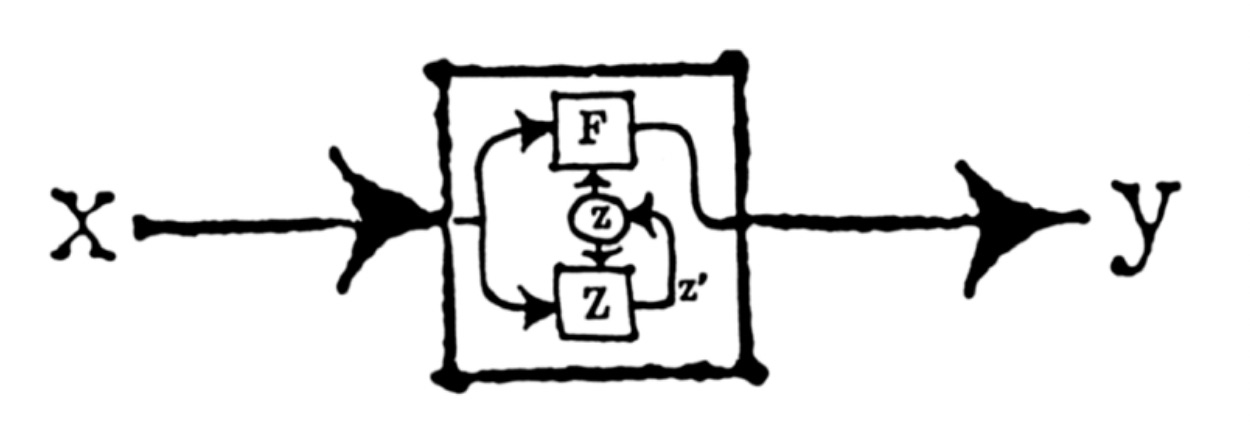

As Norbert Wiener discovered, the minimum requirement for intelligent goal-seeking behavior is feedback. A loop. That’s it.

Feedback means that each step becomes the integration of all previous steps. A memory, or model, of the system’s interaction with the environment accumulates, allowing future actions to be influenced by past experiences.

So, through this lens we might say a thermostat is intelligent. It gets information and does something about it.
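A thermostat’s loop fits in a few lines. This is a minimal sketch under stated assumptions: the toy room physics (heat leaking toward the outside temperature at 10% of the gap per step), the gains `kp` and `ki`, and the name `simulate_thermostat` are all hypothetical, and the controller is a textbook proportional-integral (PI) loop, not anything taken from Beer or Wiener. The `integral` variable is a literal version of each step becoming the integration of all previous steps: it is a running sum of every past error.

```python
def simulate_thermostat(setpoint: float, outside: float, steps: int,
                        kp: float = 0.5, ki: float = 0.05) -> list[float]:
    """Simulate a room whose heater is driven by a PI feedback loop (toy model)."""
    temp = outside   # the room starts at the outside temperature
    integral = 0.0   # running sum of all past errors: the loop's "memory"
    history = []
    for _ in range(steps):
        error = setpoint - temp                         # get information
        integral += error                               # fold this step into all previous ones
        heat = max(0.0, kp * error + ki * integral)     # do something about it (heater can't cool)
        temp += heat - 0.1 * (temp - outside)           # hypothetical leak toward outside temp
        history.append(temp)
    return history


full = simulate_thermostat(setpoint=20.0, outside=10.0, steps=200)
no_memory = simulate_thermostat(setpoint=20.0, outside=10.0, steps=200, ki=0.0)
print(round(full[-1], 2), round(no_memory[-1], 2))
```

With these parameters the full loop settles on the 20-degree setpoint. Setting `ki=0` deletes the memory, and the same room settles near 18.33 instead: the heater’s push shrinks along with the current error, and without the accumulated past error there is nothing left to close the gap.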

What else? Suddenly, we see that we embody many forms of intelligence outside of our brains. Our DNA, for example, encodes the memory of billions of years of experience within our environment. Every life lived by our ancestors, all the way back to that first single cell. Each recursive step in the game of life, a gift to us.

We also see that rainforests, markets, cities, and even Earth’s climate system embody complex forms of intelligence.

Feedback can be found nearly everywhere, so intelligence can be found nearly everywhere.

“LLMs aren’t actually thinking. They’re just predicting the next token.”

It is common to encounter this claim in the discourse around LLMs. Is this right?
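To make “predicting the next token” concrete at the smallest possible scale, here is a toy sketch: a word-level bigram model that predicts whichever word most often followed the current word in its training text. Everything here is an illustrative assumption (the function names, the whitespace tokenization, the one-line corpus). LLMs like GPT-4 predict subword tokens with large neural networks, not word counts; what carries over is the loop of reading context, predicting a next token, appending it, and repeating.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = text.split()  # naive whitespace "tokenizer"
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent successor of `word`, or None if never seen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, length: int) -> list[str]:
    """Repeatedly predict the next token and feed it back in as context."""
    out = [start]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:  # dead end: no recorded successor
            break
        out.append(nxt)
    return out


model = train_bigrams("the pond thinks and the pond adapts and the pond survives")
print(predict_next(model, "the"))  # pond
print(generate(model, "and", 2))   # ['and', 'the', 'pond']
```

Because `generate` feeds each prediction back in as the next input, even this toy is a small feedback loop.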

We are faced with a surprising fact. If you predict the next token at large enough scale, you can generate coherent communication, generalize and solve problems, even pass the Turing Test. So is this actually thinking?

In the face of this Copernican moment, I feel Stafford Beer’s pond brain offers us some wisdom.

“It’s just predicting the next token.”

“It’s just following pheromone trails.”

“It’s just a PID loop.”

“It’s just a pond.”

Eppur si pensa. (And yet it thinks.)

An LLM gets information and does something about it.

Special thanks to for feedback and inspiration. Did you know there are whole fields of natural computing and unconventional computing that continue to pursue the dream of a pond brain?