LLMs break the internet. Signing everything fixes it.

LLMs break the internet. The going rate for GPT-4 is $0.06 per 1000 tokens, or about $0.00008 per word. New open source models like Dolly and StableLM will drop costs even further, and without the content restrictions.

Thought has never been so cheap. Creative expression has never been so accessible. Also spam, phishing, harassment mobs, and mass influence ops have never been so cheap, so accessible.

You thought the internet was a mess before? Get ready for bots that beat the Turing test, synthesize your voice, generate fake social consensus at scale. We’re seeing the beginnings of this already. Expect a tidal wave of spam, identity theft, phishing, ransomware over the next 36 months.

The Dead Internet Theory wasn’t wrong, just early.

Sign everything

So what do we do about this world we are living in where content can be created by machines and ascribed to us? I think we will need to sign everything to signify its validity. When I say sign, I am thinking cryptographically signed, like you sign a transaction in your web3 wallet.

(Fred Wilson on AVC, 2022. “Sign Everything”)

How can I know this is you, and not an AI? Because you’ve signed everything with a cryptographic key that only you control.

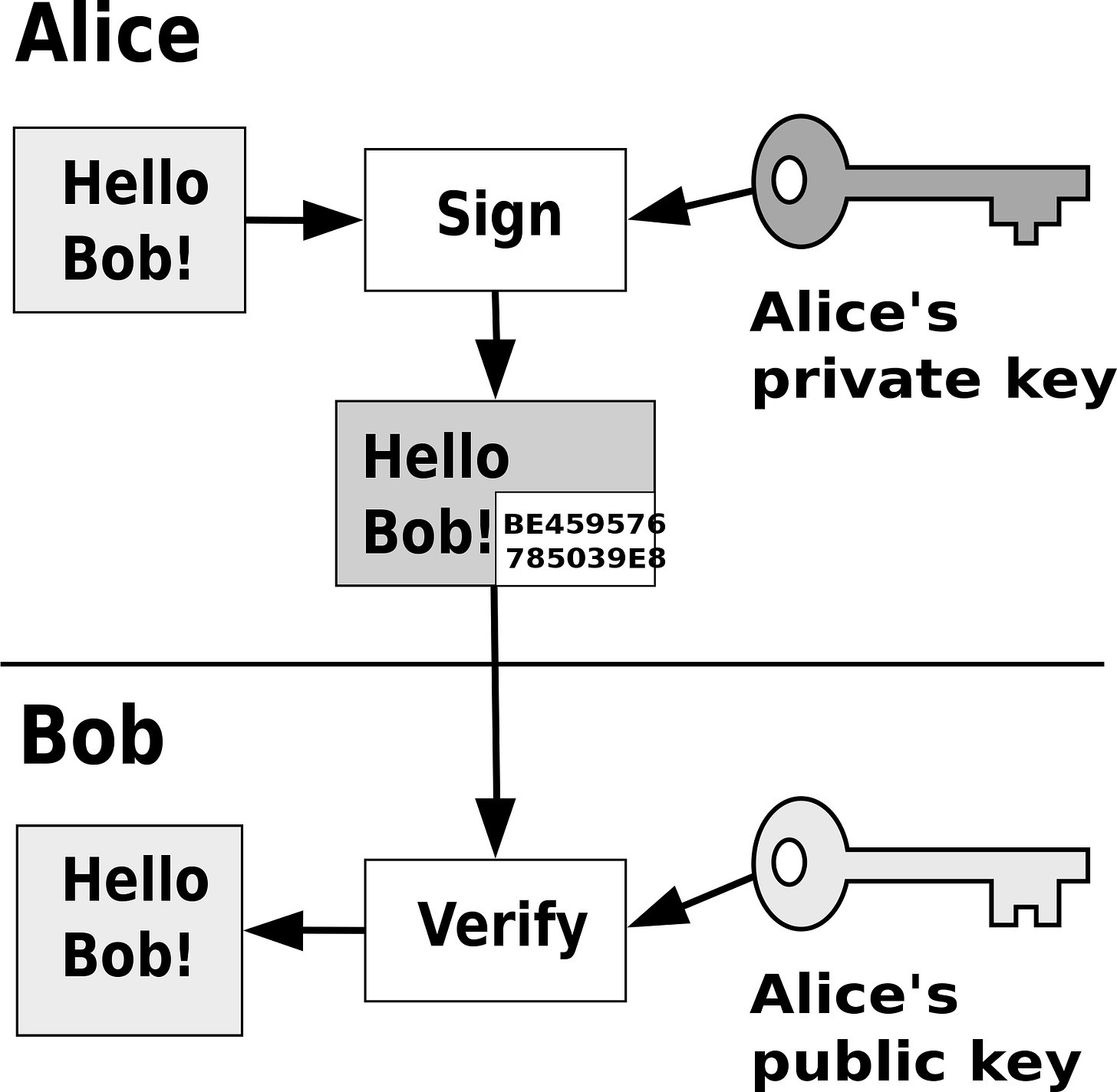

Signing means using public key cryptography to implement cryptographic signatures, one of the basic building blocks of secure computing. You sign your messages with your private key. I use your public key to verify your signature.

The magic behind this trick combines a one-way cryptographic hash function with your private key. Together they take your content and generate a corresponding string of numbers and letters, unique to your content and key. These arcane characters don’t mean much to you or me, but to a computer, they offer unforgeable proof that the document was signed with your specific key. Cryptographic signatures are the fundamental mechanism we use to guarantee authenticity and non-repudiation in software.
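To make the sign-and-verify loop concrete, here is a minimal sketch of a Lamport one-time signature, a scheme that can be built from nothing but a one-way hash function and the Python standard library. It is an illustration only, and an assumption on my part rather than what any particular wallet or protocol uses: real systems typically rely on schemes like Ed25519 or ECDSA, and a Lamport key must never sign more than one message. The function names (`generate_keypair`, `sign`, `verify`) are hypothetical.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    """One-way hash: easy to compute, infeasible to reverse."""
    return hashlib.sha256(data).digest()


def _bits(message: bytes) -> list[int]:
    """The 256 bits of the message's SHA-256 digest, most significant first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def generate_keypair():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(_h(zero), _h(one)) for zero, one in private]
    return private, public


def sign(private, message: bytes) -> list[bytes]:
    """Reveal one secret per digest bit. ONE-TIME: never reuse the key."""
    return [private[i][bit] for i, bit in enumerate(_bits(message))]


def verify(public, message: bytes, signature: list[bytes]) -> bool:
    """Check that each revealed secret hashes to the matching public value."""
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == public[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, _bits(message)))
    )
```

You keep `private` to yourself and publish `public`. Anyone holding `public` can run `verify`, but producing a valid signature for a different message would require reversing the hash.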

Give me a firm spot on which to stand, and I shall move the earth. (Archimedes)

AI can fake just about any kind of social proof, but AI cannot fake a cryptographic signature. This makes cryptographic signatures a firm foundation around which we can rebuild trust.

What does this look like in an app?

You’ve seen cryptographic signatures before, but maybe didn’t know it. You know that little lock icon?

It turns out the web already signs some things cryptographically. Over HTTPS, every site presents a signed certificate, and if you click the lock, you can find a long string of numbers and letters inside it: a cryptographic signature. Here’s the cryptographic signature for my website.

Of course, I don’t need to know or care about this long string of numbers. Numbers are for computers. I’m a person. I look for the lock. Once we have provably-secure communication, it becomes possible to build a secure and friendly UI on top.

But if the web already uses cryptographic signatures, what’s the problem? Aren’t we done here? Not quite. See, the web signs at the wrong layer.

On the web, servers have signatures, people don’t. This is ok, but it answers the wrong question. In our post-LLM world, the question is “Who is this? Can I trust them?”

I don’t have a signature, you don’t have a signature. That means we can be spoofed. To fix the dead internet, we need to rebuild trust around people.

Castle-moat doesn’t cut it.

We need zero-trust security.

Websites have cryptographic signatures. People don’t. Why? Because the web’s security model is fundamentally feudal. From Weird web3 energy:

Each app builds a moat and walls to protect the hoard of data its peasants produce. This is both for reasons of protection, and power. How did this condition emerge?

Before the advent of the internet, apps ran on your computer and saved data to your computer. The internet flipped this around. Web software ran remotely on server computers, and saved data remotely to giant databases.

Now our data was on someone else’s computer. This introduced a host of challenges for anything that required trust. Websites had to roll their own solutions for identity, access control, data protection, and payments. Browsers built walls around websites to protect this valuable data from exfiltration. Websites became the boundary of trust, and apps followed the same model.

The centralized castle-wall approach to security and moderation worked well enough in the early days of the web—a web of small forums, blogs, and mods. Yet, as the internet has scaled, we’ve begun to push up against the limits of this centralized paradigm. Public social media is beset by memetic epidemics, bots, targeted harassment, troll factories, influence ops, click farms, fake reviews... And all of this was before LLMs. What happens when we 10x, 100x, 1000x the volume? The castle wall no longer affords much protection.

Ashby’s Law of Requisite Variety: If a system is to be stable, the number of states of its control mechanism must be greater than or equal to the number of states in the system being controlled.

Only variety can absorb variety.

Centralized approaches to moderation just don’t scale. They don’t have the requisite variety to actually moderate. There’s a reason we no longer live in castles, after all. Castles only work in the small.

We’ve actually gone through this kind of transition before. In the early days of networking, when we first started connecting computers, we began by creating trusted networks. These networks were surrounded by a castle wall, called a firewall. Devices inside the firewall were trusted. Devices outside were not.

The problem was, as networks scaled, the boundaries of the castle wall had to be continually redrawn. The number of internet applications grew, opening up more and more backdoors through the castle wall. More people needed access inside the castle from more places. This castle wall approach did not have requisite variety to match reality. Ultimately the castle wall was so large, with so many doors, it no longer afforded much protection.

The answer to this dilemma was zero-trust networking. Forget the castle wall entirely. Rebuild trust around people.

We did this with networks. Now it’s time to do the same with sign-ins, authorization, identity, reputation. Sign everything, with self-sovereign keys that only you control.

Building webs of trust

The first best thing that self-sovereign cryptographic signatures give us is a way to build trusted networks together. I know you, you know me. We exchange keys. From then on, I know it’s you I’m talking to, not a bot impersonating you.

Maybe you have a friend. I trust you to vouch for your friend’s key. You trust me to vouch for my friend’s. Each of us trades keys for the folks we know. As the network grows, we begin to build up address books of trusted keys. Some keys may even be vouched for by multiple trusted friends, increasing trust.

As time goes on, you will accumulate keys from other people that you may want to designate as trusted introducers. Everyone else will each choose their own trusted introducers. And everyone will gradually accumulate and distribute with their key a collection of certifying signatures from other people, with the expectation that anyone receiving it will trust at least one or two of the signatures. This will cause the emergence of a decentralized fault-tolerant web of confidence for all public keys.

(Phil Zimmermann, 1994. “PGP User Manual”)

This decentralized approach to security is called a web of trust. It feels intuitive, because it mirrors the way we form relationships in real life.
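Here is a rough sketch of how an address book of trusted keys might decide whether to accept a key it has never seen. Everything in it is an assumption made for illustration: the `AddressBook` class, its method names, and the simple "at least N trusted introducers" rule are hypothetical, and keys are stood in for by plain strings. In a real system each certification would be a cryptographic signature that gets verified before it is recorded.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AddressBook:
    # Keys I exchanged directly and chose to treat as trusted introducers.
    trusted_introducers: set[str] = field(default_factory=set)
    # certifications[subject] = keys that have vouched for that subject.
    certifications: dict[str, set[str]] = field(default_factory=dict)

    def add_certification(self, signer: str, subject: str) -> None:
        """Record that `signer` vouches for `subject` (assumed already verified)."""
        self.certifications.setdefault(subject, set()).add(signer)

    def vouchers(self, key: str) -> set[str]:
        """Only vouchers that I trust as introducers count."""
        return self.certifications.get(key, set()) & self.trusted_introducers

    def is_trusted(self, key: str, threshold: int = 1) -> bool:
        """Trust keys I hold directly, or keys with enough trusted vouchers."""
        if key in self.trusted_introducers:
            return True
        return len(self.vouchers(key)) >= threshold
```

Raising `threshold` is one way to express "vouched for by multiple trusted friends": a key certified by two introducers passes `is_trusted(key, threshold=2)`, while a key certified only by strangers never passes.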

Using webs of trust, we can build private and secure friend-to-friend networks, like invite-only Discord servers, but peer-to-peer and decentralized.

Webs of trust are perfect for Dunbar-scale social. As the public internet becomes a dead internet, we can retreat to safe cozywebs of trust, protected from bot swarms by our secure cryptographic signatures.

Scaling webs of trust

This is a start. However, beyond the Dunbar scale of about 150 people, friend-to-friend trust will begin to break down. You trust me, and you might trust my friend, but what about the friend-of-a-friend-of-a-friend? Trust just doesn’t scale by the transitive property. At a certain scale, you can’t keep track of all the players, so free-riders get away with it. So how might we scale webs of trust together?

Reputation: If you can sum up what others did in the past, then you can alter your actions in response. Reputation transforms trust into a repeated game. Bad actors get a bad reputation. Free-riding stops working. The winning move becomes win-win cooperation. Reputation is why you feel comfortable booking an Airbnb and sleeping in a stranger’s house, or catching an Uber and hopping into a stranger’s car.

So, this is another advantage of self-sovereign public keys. We can layer on reputation at the protocol layer, for example, by summing up the public edits a key has made. I don’t know you, but can I trust your key? Yes, it has a good reputation.
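As a toy illustration of "summing up" what a key has done, the sketch below tallies a public log of scored actions per key. The log format, the scores, and the function names `reputation` and `is_reputable` are all hypothetical. A real protocol would need to verify that each entry was actually signed by the key it names, and would likely weigh who is doing the scoring.

```python
from collections import defaultdict


def reputation(events):
    """Sum scored public actions per key.

    `events` is an iterable of (key, delta) pairs, e.g. +1 for an edit
    others accepted and -1 for one that was flagged.
    """
    totals = defaultdict(int)
    for key, delta in events:
        totals[key] += delta
    return dict(totals)


def is_reputable(totals, key, minimum=1):
    """Unknown keys have no history, so they start at zero."""
    return totals.get(key, 0) >= minimum
```

Because a key’s history follows it around, every interaction becomes one round of a repeated game: a key that burns its reputation has to start over from zero.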

Importantly, it’s even possible to attach reputation to keys while supporting anonymity, pseudonymity, and multiple identities. I don’t need to know who you are, just that you’re ok. This can be done through a trusted intermediary, or better yet, we might use zero-knowledge proofs to trustlessly communicate reputation without revealing identity.

So self-sovereign keys, webs-of-trust, and reputation get us most of the way back to a functioning internet in the age of LLMs. They might not prove bot-or-not, but they prove trustworthiness, and that’s what matters. But we can still go a step further…

Attestation: when everyone has a key (or multiple keys), it’s also possible to attach attestations to those keys. Think various forms of “bluecheck”, signed and published to a decentralized source of truth:

Proof-of-humanity. The big idea here is to answer the question of bot-or-not, without actually having to reveal anything about the person’s identity. You can get good-enough proofs-of-humanity through in-person events, anonymized identity, anonymized biometrics, webs of trust, or combinations of the above. Here again, zero-knowledge proofs make it possible to prove the attestation without revealing identity.

Composable moderation. You can also attach tags to keys, allowing for decentralized, bottom-up discovery and moderation. Anyone can create and apply tags (“spam”, “nsfw”). Users and services can choose how these tags filter or alter the display of content. Bluesky is taking this approach to moderation. I’m excited by their work here.
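To sketch what tag-based moderation could look like, the example below lets anyone publish a label against a key and leaves each reader to decide which labelers to listen to and what each tag should do. This is not Bluesky’s actual API or data model. The `Label` type, the `decide` function, and the three actions ("show", "warn", "hide") are assumptions made for illustration.

```python
from dataclasses import dataclass

# Higher number wins when several labels apply to the same author.
SEVERITY = {"show": 0, "warn": 1, "hide": 2}


@dataclass(frozen=True)
class Label:
    labeler: str  # key of whoever applied the tag
    subject: str  # key being tagged
    tag: str      # e.g. "spam", "nsfw"


def decide(author, labels, subscribed_labelers, policy):
    """Return "show", "warn", or "hide" for content signed by `author`.

    Only labels from labelers this reader subscribes to are considered.
    `policy` maps a tag to an action; tags without a policy are ignored.
    """
    outcome = "show"
    for label in labels:
        if label.subject != author or label.labeler not in subscribed_labelers:
            continue
        action = policy.get(label.tag, "show")
        if SEVERITY[action] > SEVERITY[outcome]:
            outcome = action
    return outcome
```

Two readers looking at the same labels can reach different outcomes, because the filtering happens on their side: one hides everything tagged "nsfw", another ignores that tag entirely, and neither needs permission from a central moderator.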

Importantly, public keys are part of the open protocol layer, meaning permissionless innovation becomes possible. New moderation tools and trust protocols can emerge from the bottom-up. Some might be silly, others profound. We’ve barely explored what trust can look like over the network.

Decentralizing moderation is the only way for people to maintain the requisite variety needed to freely define their experience. This is an evolutionary arms race between consuming and conserving attention. Permissionless innovation in content generation demands permissionless innovation in content moderation. To find balance, positive feedback (LLMs) and negative feedback (moderation) must co-evolve together, at the same pace.

Noosphere fixes this

All of this sounds a bit like Noosphere, and that’s no accident. Noosphere is a decentralized protocol for thinking together with people and AIs.

In Noosphere, you…

Sign everything with a key that only you control.

Build webs of trust by following others and exchanging keys.

Layer any reputation and attestations on top.

At the core, Noosphere is a worldwide decentralized graph, made up of smaller public and private graphs, called spheres. Your sphere is signed with your key, and contains your data, and an address book of keys you trust. You can follow other spheres, they can follow you. When you do, you exchange keys, building webs of trust.

The combination of open webs-of-trust, reputation, and attestation creates the ground conditions for positive-sum collaboration. Communication between keys becomes a repeated game. Bad actors get a bad reputation. Free-riding stops working. The winning move becomes win-win cooperation.

Not just collaboration between people, but also between people and AIs. We’re building Noosphere because we imagine a world where people and AIs think together, to reach new creative heights.

With the right network, people and AIs can be smarter together than we are separately. Not the dead internet. The becoming-alive internet.