Composability with other tools

A thought-provoking tweet:

Interoperability, exchange, and composition are some of my primary motivations for building Subconscious. Why isn’t interoperability more common between tools for thought? Why is it that most of our tools are silos? How did we get here?

One-off API integration doesn’t scale

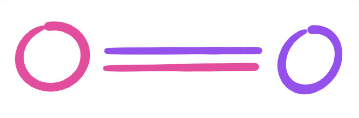

Imagine we have two apps, Magenta and Purple. Each has a REST API. So the Magenta devs write an integration for Purple’s API, and Purple’s devs write an integration for Magenta’s API. 2 apps, 2 API integrations.

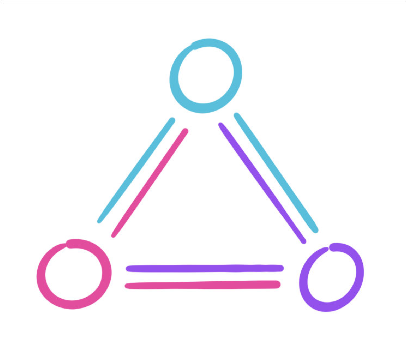

A third app enters the scene. The Magenta and Purple teams each write an integration for Teal’s API, and Teal’s developers write integrations for Magenta and Purple. We’re up to 3 apps, 6 integrations.

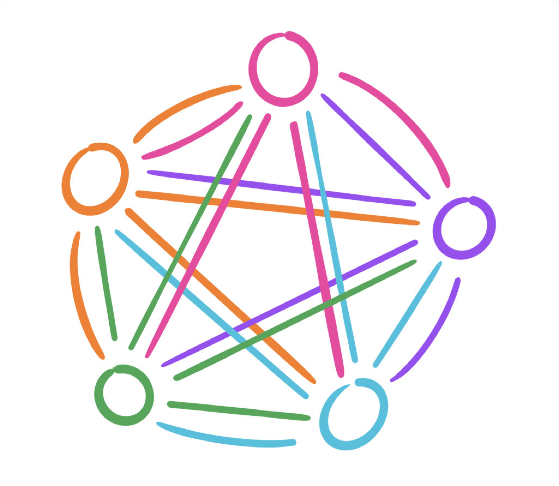

Now we’re up to 5 apps and 20 integrations.

With one-off API integrations, every app must be connected with every other app in both directions. The number of integrations required for full interoperability equals the maximum number of edges in a directed graph without self-loops: n * (n - 1). Adding a sixth app takes us from 20 to 30 integrations. Ten apps means 90 integrations!
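The arithmetic is easy to check. Here’s a small Python sketch that treats each one-off integration as an ordered (integrating app, target API) pair and compares the enumeration with the closed form. The function names are illustrative only; nothing here comes from a real integration framework.

```python
from itertools import permutations

def point_to_point_integrations(apps: list[str]) -> list[tuple[str, str]]:
    """One bespoke integration per ordered (integrating app, target API) pair."""
    # These are the edges of a directed graph with no self-loops.
    return list(permutations(apps, 2))

def integration_count(n: int) -> int:
    """Closed form for the same count: n * (n - 1)."""
    return n * (n - 1)

apps = ["Magenta", "Purple", "Teal"]
for integrator, target in point_to_point_integrations(apps):
    print(f"{integrator} integrates {target}'s API")  # 6 lines in total

for n in (2, 3, 5, 6, 10):
    print(n, integration_count(n))  # prints 2 2, then 3 6, 5 20, 6 30, 10 90
```

Enumerating the pairs makes the "both directions" point visible: Magenta → Teal and Teal → Magenta are separate pieces of code, maintained by separate teams.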

There are other challenges as well:

Imagine one of these apps needs to change its API. Now every other app in the network needs to change its integration code. Everyone in the network has to coordinate, because everyone in the network has to implement everything.

Because integrations are part of the application code, the developer of the app is responsible for integrating that app with other tools. This mostly limits integrations to the set of workflows that an app’s product team can imagine and build under their particular set of incentives.

Web-facing APIs must be permissioned and rate-limited, which means they are controlled. They run on someone else’s computer, and when you use a web API you are visiting someone else’s private property, which you have been granted permission to visit. The emergent result is that web APIs are more often rented than owned. Deals may have to be worked out to gain access.

This makes the emergence of widespread interoperability extremely unlikely on the web.

In practice, this moves interoperability out of the realm of the technical and into the realm of B2B deals. With all these coordination costs, it often makes more sense for a large company like Salesforce or Facebook to simply buy and absorb other products, and that is exactly what they do.

Protocols are hubs

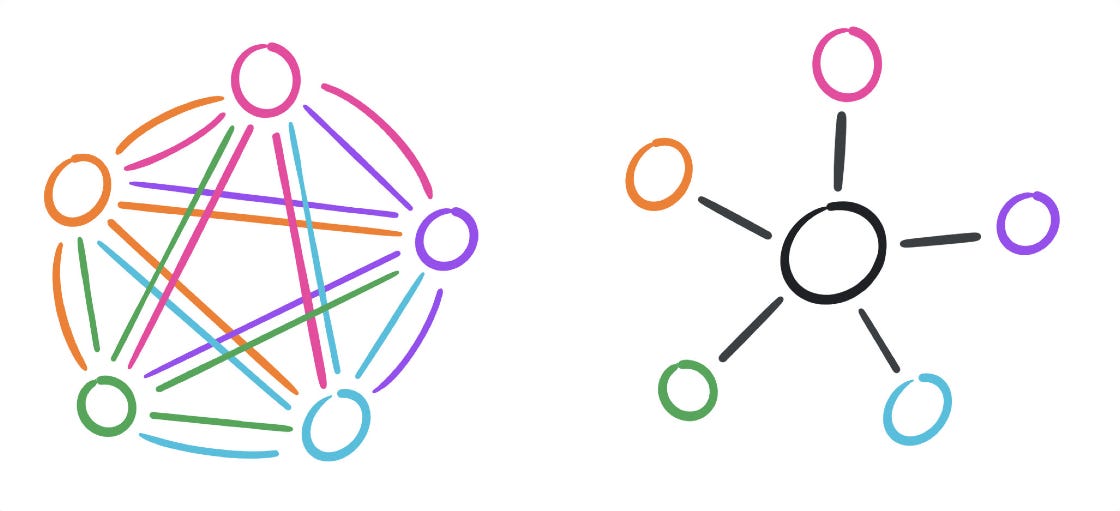

Instead of every app integrating with every other app’s bespoke API, what if they communicated through a shared protocol?

The protocol acts as a hub in the network, cutting the number of connections necessary for full interoperability from n * (n - 1) to just n. The number of integrations scales 1:1 with the number of apps.

Better yet, none of these apps have to know anything about each other. All they need to know is the protocol. This makes the set of possible workflows between apps an open set.
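To make the hub idea concrete, here’s a rough Python sketch. It assumes a hypothetical shared protocol where a note is just a dict with "title" and "body" keys. Each app writes exactly one adapter to and from that format, and the `Adapter` and `transfer` names are made up for illustration. Three adapters are enough for all six ordered pairs to exchange data, and no adapter mentions any other app.

```python
from dataclasses import dataclass
from itertools import permutations
from typing import Any, Callable

# Hypothetical shared protocol: a note is a dict with "title" and "body" keys.
Note = dict[str, str]

@dataclass
class Adapter:
    """The one integration each app writes: to and from the shared protocol."""
    name: str
    to_protocol: Callable[[Any], Note]
    from_protocol: Callable[[Note], Any]

def transfer(data: Any, src: Adapter, dst: Adapter) -> Any:
    # src and dst never reference each other; they only share the protocol.
    return dst.from_protocol(src.to_protocol(data))

def purple_to_protocol(text: str) -> Note:
    # Purple (hypothetically) stores a note as "title", a blank line, then the body.
    title, _, body = text.partition("\n\n")
    return {"title": title, "body": body}

# Three apps, each with its own private data model.
magenta = Adapter("Magenta",
                  lambda t: {"title": t[0], "body": t[1]},
                  lambda n: (n["title"], n["body"]))
purple = Adapter("Purple",
                 purple_to_protocol,
                 lambda n: f"{n['title']}\n\n{n['body']}")
teal = Adapter("Teal",
               lambda d: {"title": d["heading"], "body": d["text"]},
               lambda n: {"heading": n["title"], "text": n["body"]})

apps = [magenta, purple, teal]
note = ("Hubs", "Protocols are hubs.")
# 3 adapters (n) cover all 6 ordered pairs (n * (n - 1)).
for src, dst in permutations(apps, 2):
    data = transfer(note, magenta, src)  # express the note in src's own model
    print(f"{src.name} -> {dst.name}: {transfer(data, src, dst)!r}")
```

Adding a fourth app means writing one more adapter, not six more integrations. The design choice that makes this work is that the protocol, not any particular app, defines the shape of the data.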

If you can make hubs the default choice, interoperability is very likely to emerge.

Files make interoperability the default

On the web, the most common way to save data is in a database hidden behind a server. This makes interoperability an explicit feature that must be implemented through APIs. The default is for web apps not to interoperate.

Unlike the web, the classic desktop computing paradigm makes a distinction between apps and files. The default way for a desktop app to save data is to save it to an external file.

Files act as hubs around which interoperability can emerge. Rather than implementing each other’s bespoke APIs, apps can collaborate over a shared file type, cutting down the necessary integrations from n * (n - 1) to just n. Take a .png. How many apps can work with a .png? Too many to count, and it’s an open set. Any app can opt in to working with .pngs. Workflows that span multiple apps can emerge organically and permissionlessly.
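Opting in really is that cheap. As a minimal sketch, here’s how an app could read a PNG’s dimensions using nothing but the published file format: the 8-byte PNG signature, followed by the mandatory IHDR chunk that stores width and height as big-endian 32-bit integers. It only reads the header, not the pixel data, and `make_png_header` is a hypothetical helper that exists so the example runs without a real file on disk.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def png_dimensions(data: bytes) -> tuple[int, int]:
    """Read (width, height) from the start of a PNG, using only the public spec."""
    if data[:8] != PNG_SIGNATURE:
        raise ValueError("not a PNG file")
    # The first chunk must be IHDR: a 4-byte length (13), the 4-byte type,
    # then width and height as big-endian unsigned 32-bit integers.
    length, chunk_type = struct.unpack(">I4s", data[8:16])
    if chunk_type != b"IHDR" or length != 13:
        raise ValueError("malformed PNG header")
    width, height = struct.unpack(">II", data[16:24])
    return width, height

def make_png_header(width: int, height: int) -> bytes:
    """Build just the signature and IHDR chunk so the example runs without a file."""
    # 8-bit depth, color type 6 (RGBA), default compression/filter, no interlace.
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 6, 0, 0, 0)
    crc = zlib.crc32(b"IHDR" + ihdr)
    return PNG_SIGNATURE + struct.pack(">I", len(ihdr)) + b"IHDR" + ihdr + struct.pack(">I", crc)

print(png_dimensions(make_png_header(640, 480)))  # (640, 480)
```

With a real file you’d pass in the first 24 bytes, e.g. `open("photo.png", "rb").read(24)` (the filename is a placeholder). Notice that the app never talks to whichever program created the image. The file format is the only contract.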

Files allow interoperability to emerge retroactively. New apps can come along and implement the file formats of other popular apps, uplifting their file format into a de facto protocol. This has happened many times, from .doc, to .rtf, to .psd. Competing products are able to get off the ground by interoperating with incumbents. New workflows can be created permissionlessly.

Files mean the OS can guarantee basic rights for your data. On the web, “data” is a miasma, invisibly collected, transferred, leaked, and shared. In the desktop paradigm, “data” is a document, a physical object you can manipulate. All documents can be moved, copied, shared or deleted, whether or not an app implements these features.

A lot can be learned from the design of files.

Where do protocols tend to emerge?

So, interoperability is an emergent outcome. It doesn't exist in any one mechanism or standard, but rather emerges from the interplay between the actors in a system, and the structure of a system. Some system designs are more conducive to the emergence of interoperability than others. Files are one such example. Yet, even system designs which favor interoperability are not guaranteed to produce it. The actors in a system have their own goals.

Commercial software vendors, especially aggregators, are incentivized to resist interoperability. To be composable is to be commoditized. Interoperability means you no longer have a data lock-in moat or a privileged hub position in the network.

Yet protocols do successfully emerge:

Communication: TCP/IP, HTTP, IMAP, IPFS, BitTorrent…

Music: MP3, WAV, FLAC…

3D: glTF, USD, COLLADA…

Images and photos: .tiff, .png, .jpg, .gif…

Fonts: .ttf, .otf, .woff…

Publishing: HTML, RSS/Podcasting, sitemap.xml, schema.org…

Code: plain text files

How and where these protocols emerge is contingent on network effects, incentives, path dependency, and other strategic factors, but I think I see some themes.

The early open networks: TCP/IP, HTTP, IMAP. These often came out of government-funded labs that weren’t bound by profit-seeking incentives. These protocols attempted to solve practical problems with layerable APIs that could build on each other, and support an open-ended set of use-cases.

Low-level primitives: images, fonts, sound. Some mix of licensing models, patent pools, and open standards. These protocols are so elemental that perhaps the value of a standard is too vast to pass up.

Commercial exchange: Schema.org, sitemap.xml. Protocols in this zone emerge to commoditize their complements.

All else being equal, demand for a product increases when the prices of its complements decrease.

Joel Spolsky, 2002, on commoditizing your complements.

If your game is search, your complement is useful data, so you create standards that make that data legible. Adoption of these standards reflects a power relationship in the ecosystem.

Cryptocurrency has provoked an influx of speculative capital, with an unfamiliar set of incentives that are generating new kinds of open-source infrastructure. If you squint, it looks like we might be seeing the bubble phase described in Carlota Perez’s Technological Revolutions and Financial Capital. Following Perez, the influx of capital during the bubble phase fuels the creation of new general-purpose infrastructure. The bubble then bursts, but the infrastructure it leaves behind becomes the basis for the next technological paradigm. The question is, what kind of infrastructure does cryptocurrency leave behind?

Professional creative workflows: game development, 3D, music, video production, tools for thought... The design space of some creative work is so vast that no one tool can embody the entire discipline.

New tools might be invented which create whole new disciplines, like Houdini for procedural effects. Workflows are cobbled together across multiple apps, either by co-opting existing formats or by creating new standards.

To some degree, creative work means piecing together unique workflows using many different tools. The degree to which a workflow has been embodied in a single tool is the degree to which that creative act has been commoditized.

Protocols produce creative combinatorial explosions

The notion behind tools for thought is that we can increase our creative range of motion through the use of tools. So, what concept shifts might widen the potential of our creative tools?

When you have a universal API for composition, n tools can be combined into n * (n - 1) possible pairwise workflows, and each additional tool adds 2n more.

n * (n - 1). That’s our directed graph equation again, but this time the network effect is on our side. The more tools, the more possibilities, and these possibilities grow super-linearly with the number of tools in the ecosystem.
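A quick Python sketch shows how fast this grows. It makes a generous assumption: any ordered chain of distinct tools (A → B, or A → B → C) counts as a potential workflow, which real tools won’t always honor. Under that assumption, pairs grow quadratically, each new tool adds 2n pairwise workflows (since (n + 1) * n - n * (n - 1) = 2n), and allowing longer chains grows the total much faster still.

```python
from math import perm

def pairwise_workflows(n: int) -> int:
    """Ordered (producer, consumer) pairs among n composable tools."""
    return n * (n - 1)

def chained_workflows(n: int, max_len: int) -> int:
    """Ordered chains of 2..max_len distinct tools, such as A -> B -> C."""
    return sum(perm(n, k) for k in range(2, max_len + 1))

for n in (3, 5, 10):
    gain = pairwise_workflows(n + 1) - pairwise_workflows(n)  # always 2 * n
    print(n, pairwise_workflows(n), chained_workflows(n, 3), gain)
# 3 6 12 6
# 5 20 80 10
# 10 90 810 20
```

`math.perm(n, k)` counts ordered selections of k distinct items from n, which is exactly the number of k-tool chains with no repeats.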

The Unix Philosophy got this right from the beginning:

Expect the output of every program to become the input to another, as yet unknown, program.

Unix Philosophy, Bell System Technical Journal, Jul-Aug 1978
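Here’s a loose Python analogy to that idea, not Unix itself. Every stage takes a stream of text lines and yields text lines, so any stage can feed any other without knowing what comes next. The stage names are hypothetical helpers that loosely mimic `grep`, `sort | uniq -c`, and a shell pipe.

```python
from collections import Counter
from typing import Callable, Iterable, Iterator

# Each "program" takes an iterable of text lines and yields text lines,
# so any of them can feed any other without knowing what comes next.
Stage = Callable[[Iterable[str]], Iterator[str]]

def grep(pattern: str) -> Stage:
    """Keep only lines containing pattern (a plain substring, not a regex)."""
    def run(lines: Iterable[str]) -> Iterator[str]:
        return (line for line in lines if pattern in line)
    return run

def lower(lines: Iterable[str]) -> Iterator[str]:
    """Lowercase every line."""
    return (line.lower() for line in lines)

def count_unique(lines: Iterable[str]) -> Iterator[str]:
    """Roughly `sort | uniq -c`: emit "count line" for each distinct line, sorted."""
    for line, count in sorted(Counter(lines).items()):
        yield f"{count} {line}"

def pipeline(*stages: Stage) -> Stage:
    """Compose stages left to right, like a shell pipe."""
    def run(lines: Iterable[str]) -> Iterator[str]:
        for stage in stages:
            lines = stage(lines)
        return iter(lines)
    return run

log = ["GET /notes", "POST /notes", "get /notes", "GET /files"]
tally = pipeline(lower, grep("/notes"), count_unique)
print(list(tally(log)))  # ['2 get /notes', '1 post /notes']
```

None of the stages were written with the others in mind. The shared shape, lines in and lines out, is what lets them be recombined into workflows their authors never anticipated.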

If a tool supports composition with other tools, it supports open-ended evolution. At that point, all of the other ways in which it might be terrible become incidental, because an evolutionary system will always be more expressive than one that isn’t.

Nothing else can widen the potential of creative tools as rapidly as composability with other tools. It's not even close.